Case Study: Conversation Design

Handling Unexpected Replies with Personality 😉

Problem.

How can we handle unexpected replies while highlighting our brand and the bot’s personality? Specifically, we had received user feedback that the bot “doesn’t respond to all my questions,” and we wanted to address this.

Background.

Initially, we launched a fairly linear chatbot experience built around weekly stories that let users take different story paths. Our product improvements had focused on these individual experiences; however, I wanted to understand what changes we could make to improve bot responses across ALL of them.

Goals.

Create universal bot responses to address unexpected user replies across multiple stories or experiences.

Focus on responses to the common user replies that will have the most impact and that we can implement quickly.

Write bot responses that highlight the bot’s personality and our brand.

Process.

Data Analysis

I began by looking at the user data to understand what types of replies weren’t being handled well across all stories. I pulled a general dataset of all unexpected user replies (replies that received a fallback message) across stories. However, many of these replies seemed to be answers to the bot’s questions in a specific story and should be handled with more context at the story level.

I decided to pull the data again, focusing only on user replies that contained a clear question, since the bot could act on these more readily.
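To make the second pull concrete, here is a minimal sketch of the filter, assuming each logged reply records its story, its text, and whether it triggered a fallback. The `LoggedReply` shape and the question heuristic (a question mark or a leading question word) are illustrative assumptions, not our actual reporting query.

```python
import re
from dataclasses import dataclass


# Hypothetical shape of one logged reply; the real export format may differ.
@dataclass
class LoggedReply:
    story: str
    text: str
    got_fallback: bool


# Rough heuristic for "contains a clear question": a leading question word.
QUESTION_WORDS = re.compile(
    r"^\s*(what|who|how|why|when|where|can|could|should|do|does|is|are)\b",
    re.IGNORECASE,
)


def is_question(text: str) -> bool:
    """Treat a reply as a question if it has a '?' or starts with a question word."""
    return "?" in text or bool(QUESTION_WORDS.match(text))


def unhandled_questions(log: list[LoggedReply]) -> list[LoggedReply]:
    """Keep only replies that hit a fallback AND look like a question."""
    return [r for r in log if r.got_fallback and is_question(r.text)]


log = [
    LoggedReply("onboarding", "Can we try X?", True),
    LoggedReply("week-3", "yes please", True),            # story answer, not a question
    LoggedReply("week-3", "what do you look like", True),  # no '?', but starts with "what"
    LoggedReply("week-2", "How are you?", False),          # already handled, no fallback
]

for reply in unhandled_questions(log):
    print(reply.story, reply.text)
```

A keyword heuristic like this will miss some questions and catch some non-questions, so the output is a starting point for manual review rather than a final categorization.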

This data showed some clear patterns, and I organized the user questions into three categories:

Clarification Questions

One common type was clarification questions, for instance:

“What?”

“What’s that?”

“How?”

These questions ask for clarification about what the bot said in the previous story message. In the current configuration, users receive the designated fallback message for that point in the story, which should already give them more information.

Since the user already receives a relevant reply, these messages are less critical. In the future, however, it would be worthwhile to check whether particular types of questions need more clarification and should be handled differently.

Story-specific Requests

Another type of question was a user request to explore a specific desire:

“Can we try X?”

Digging a little deeper into the data, we found that this was happening in our popular onboarding story, so we decided to address the issue within that story rather than across stories.

General Questions

The last category included more general questions that the bot could respond to at any time without additional story context. These questions usually focused on the user wanting to learn more about the bot:

“What do you look like?”

“What should I call you?”

Looking at the data, it made the most sense to start by improving bot responses to the general questions, so we could make a larger impact more quickly across all stories.

Grouping by User Intent

As a next step, I grouped the unexpected replies by common user intent (sets of replies that are all trying to achieve the same outcome):

Common Chitchat

Greeting: Hey, hiya, hi there

Emotional check-in: How are you? How’s it going? Hey, how r u?

Who Is This: Who is this? Who are you?

User/Bot Information

Bot Name: What’s your name? What should I call you?

User Name: Call me X. My name is X.

Gender change: I’m a woman/man. I want to talk to a woman/man. I want to chat as two women/men.

Age: How old are you?

Appearance/Touch

Appearance: What do you look like?

Clothing: What are you wearing?

Physical Check-in: How does this feel?

Touch: What do you want me to do?

Support

Start Over: Can we start over? Can we start again?

Cost: What does it cost? Do I need to pay?

Feedback: I have feedback.

Help: I need help.
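One way to hand this list to an NLU developer is as a small catalog that flattens into labeled training examples. The sketch below assumes hypothetical snake_case intent names and uses the example phrases above as seed utterances (the “X” placeholders are kept literally); a real training set would need many more variations per intent.

```python
# Hypothetical intent catalog mirroring the groups above; intent names are illustrative.
INTENT_GROUPS: dict[str, dict[str, list[str]]] = {
    "Common Chitchat": {
        "greeting": ["Hey", "hiya", "hi there"],
        "emotional_check_in": ["How are you?", "How's it going?", "hey, how r u?"],
        "who_is_this": ["Who is this?", "Who are you?"],
    },
    "User/Bot Information": {
        "bot_name": ["What's your name?", "What should I call you?"],
        "user_name": ["Call me X.", "My name is X."],
        "gender_change": ["I'm a woman.", "I want to talk to a man.", "I want to chat as two women."],
        "age": ["How old are you?"],
    },
    "Appearance/Touch": {
        "appearance": ["What do you look like?"],
        "clothing": ["What are you wearing?"],
        "physical_check_in": ["How does this feel?"],
        "touch": ["What do you want me to do?"],
    },
    "Support": {
        "start_over": ["Can we start over?", "Can we start again?"],
        "cost": ["What does it cost?", "Do I need to pay?"],
        "feedback": ["I have feedback."],
        "help": ["I need help."],
    },
}


def training_examples(groups: dict[str, dict[str, list[str]]]) -> list[tuple[str, str]]:
    """Flatten the catalog into (utterance, intent) pairs; the group names are for humans only."""
    return [
        (utterance, intent)
        for intents in groups.values()
        for intent, utterances in intents.items()
        for utterance in utterances
    ]


pairs = training_examples(INTENT_GROUPS)
print(len(pairs), pairs[0])
```

Keeping the human-facing groups in the same structure as the intents makes it easy to review coverage with the copywriter while the NLU side only sees the flat pairs.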

Bot Persona

Since one of our goals was to highlight the bot’s personality, I kept our bot persona in mind when drafting the initial replies. I then worked with a copywriter to fine-tune the copy and add more personality to the replies.

The Empathetic and Experienced Lover

The bot is an adventurous, thoughtful, and experienced lover. They view sex as fun, not shameful. Their language is confident and playful. They show what it’s like to be an empathetic and empowered lover who asks about their partner’s needs and models how to share their own desires.

Technical Implementation

I provided this new intent list to our NLU developer so they could start training the bot to understand these intents.

I also discussed the plan with the rest of the engineering team. We had already implemented some universal replies across stories, but these usually involved leaving the current flow altogether. For instance, if a user says “Stop,” we trigger a flow telling the user they have unsubscribed (in line with SMS rules) and letting them know how they can sign up again.

For these new replies, however, we didn’t want the user to exit the story flow permanently. They should be able to continue the story after one bot reply or a short dialogue flow. This required some technical changes, and the engineering team was able to start on them in parallel with the NLU and copy editing work.

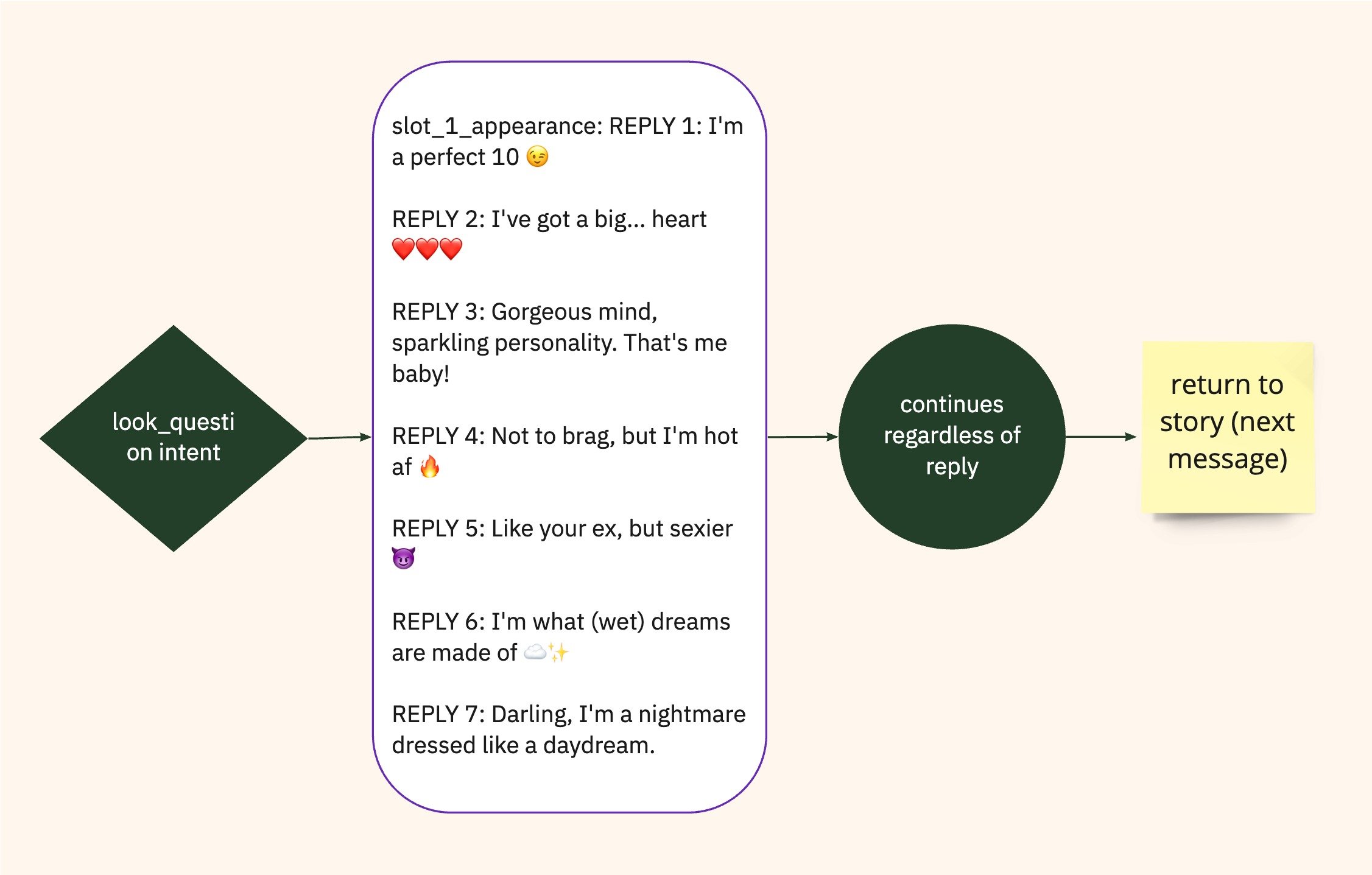
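The difference between the existing “Stop” flow and the new replies comes down to whether the session keeps its place in the story. Here is a minimal sketch of that routing, assuming a hypothetical `Session` object that tracks the current story step; the reply copy is placeholder text, not our production messages.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Session:
    story: str
    step: int                      # which story message the user is currently on
    subscribed: bool = True
    outbox: list[str] = field(default_factory=list)


# Placeholder copy; the real replies were written with the copywriter.
GLOBAL_REPLIES = {
    "greeting": "Hey you. Where were we?",
    "bot_name": "Call me whatever feels right.",
}


def handle(session: Session, intent: Optional[str], story_fallback: str) -> None:
    """Route one user reply; only 'stop' leaves the story, global intents return to it."""
    if intent == "stop":
        # Existing behavior: unsubscribe and exit the story flow altogether.
        session.subscribed = False
        session.outbox.append("You're unsubscribed. Text back anytime to sign up again.")
    elif intent in GLOBAL_REPLIES:
        # New behavior: answer, but leave session.step untouched so the story resumes here.
        session.outbox.append(GLOBAL_REPLIES[intent])
    else:
        # Anything unrecognized still gets the story's own fallback message.
        session.outbox.append(story_fallback)


s = Session("week-1", step=3)
handle(s, "bot_name", "Sorry, say that again?")
print(s.step, s.outbox[-1])
```

The key design choice is that a global reply never touches `step`; a multi-message digression would need its own small state, but it would still hand back to the same step when it finishes.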

Single Reply Message Paths

For simplicity, I decided to start with single-message replies wherever possible. If a user asks “What do you look like?”, the bot replies with one of seven possible messages.

I included several variations so that a user who asks about appearance more than once is likely to get a different response.
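A sketch of the variant selection, assuming we remember which reply the user saw last. Excluding that reply is one possible design choice that guarantees a change on an immediate repeat; picking uniformly at random from all seven would also make a different response likely. The reply strings are placeholders, and `pick_variant` is a hypothetical helper.

```python
import random
from typing import Optional

# Placeholder copy standing in for the seven appearance replies.
APPEARANCE_REPLIES = [f"appearance reply {i}" for i in range(1, 8)]


def pick_variant(variants: list[str], last: Optional[str], rng: random.Random) -> str:
    """Pick a random variant, skipping the one the user saw last time (if any)."""
    # Fall back to the full list if excluding `last` would leave nothing to choose from.
    candidates = [v for v in variants if v != last] or variants
    return rng.choice(candidates)


rng = random.Random(42)  # seeded only so the example is reproducible
first = pick_variant(APPEARANCE_REPLIES, None, rng)
second = pick_variant(APPEARANCE_REPLIES, first, rng)
print(first, "|", second)
```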

Since the bot is designed around sexual wellness language (rather than visual imagery) to teach people how to communicate, we wanted to keep the bot’s appearance ambiguous.

We used the bot’s playful personality to make the replies fun and cheeky while staying vague.

Multi-reply Message Paths

Even though we tried to keep responses down to a single message, in some cases it made more sense to have a flow of multiple messages before returning to the story.

In the name change flow, for example, we added a confirmation message to check that the bot understood the name correctly before moving on.
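The name change flow can be modeled as a tiny state machine: capture a name, ask for confirmation, and return control to the story once the user says yes. This is a hedged sketch; the `NameChangeFlow` class, the regex, the accepted “yes” words, and the copy are all illustrative assumptions.

```python
import re
from typing import Optional

# Pulls the name out of "Call me X." / "My name is X" style replies.
NAME_PATTERN = re.compile(r"^\s*(?:call me|my name is)\s+(.+?)[.!]?\s*$", re.IGNORECASE)
YES = {"yes", "yep", "yeah", "y"}


class NameChangeFlow:
    """Capture a name, confirm it, then hand control back to the story."""

    def __init__(self) -> None:
        self.state = "idle"                 # idle -> confirming -> done
        self.pending: Optional[str] = None
        self.confirmed: Optional[str] = None

    def on_message(self, text: str) -> Optional[str]:
        """Return the bot's next message, or None once the story should resume."""
        if self.state == "idle":
            match = NAME_PATTERN.match(text)
            # After a "no", the user usually just types the name, so take the whole reply.
            self.pending = match.group(1) if match else text.strip()
            self.state = "confirming"
            return f"Just to be sure, should I call you {self.pending}?"
        if self.state == "confirming":
            if text.strip().lower() in YES:
                self.confirmed = self.pending
                self.state = "done"
                return None             # confirmed: return to the story
            self.state = "idle"
            return "Oops! What should I call you instead?"
        return None


flow = NameChangeFlow()
for reply in ["Call me Sam.", "no", "Samantha", "yes"]:
    print(reply, "->", flow.on_message(reply))
```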

Testing and Feedback Loops

I decided to implement these new alternative paths in just one story first, to catch any major issues before releasing the update across stories. We tested the change internally and then with a group of beta users. Some intents weren’t triggering at the right time, and our NLU developer adjusted the training data until they were working.

Monitoring success across all stories would be more difficult, so I worked with one of our engineers to extend our existing reports to track usage of the new intents and fallback data across all stories. Using these reports, we identified places where the existing story already asked the user similar questions directly, and we excluded the alternative paths from triggering at those points.
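A minimal sketch of that exclusion rule, assuming suppression is configured per story step. The `SUPPRESSED` table, the story IDs, and the step numbers are hypothetical; the point is that the same intent can be routed to the global handler in one place and left to the story in another.

```python
from typing import Optional

# Hypothetical table of story steps where the story itself asks a related question,
# so a matching global intent should NOT take over the user's answer there.
SUPPRESSED: dict[tuple[str, int], set[str]] = {
    ("onboarding", 2): {"user_name"},   # e.g. the story asks "What should I call you?" here
    ("week-4", 5): {"appearance"},
}


def route(story: str, step: int, intent: Optional[str]) -> str:
    """Decide whether a reply goes to the global handler or stays with the story."""
    if intent is None:
        return "story"                  # unrecognized: the story's fallback handles it
    if intent in SUPPRESSED.get((story, step), set()):
        return "story"                  # the story expects this answer at this step
    return "global"


print(route("onboarding", 2, "user_name"))  # answered by the story itself
print(route("onboarding", 3, "user_name"))  # answered by the global name flow
```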

Results.

We significantly reduced the number of unhandled replies, and the bot now answers all of the most common general questions about itself. Although some users wish our replies were more specific, most have enjoyed the playful responses and the way they highlight our brand values and voice.

A New Framework

Handling some replies outside the story framework has also let us simplify the story templates that used to account for these responses. This has reduced the complexity of our stories and will serve as a model as we continue to optimize the product.

Before this project, we reviewed story-specific user data to improve each story. Now, as we standardize our story-building process, we can use more universal monitoring and reporting to improve the experience at every level.